
To create realistic data we need a list of words. On Linux you can use the word list generated by the spell checker utility under /usr/share/dict/; the name of the file varies depending on the distribution. If you're not on Linux, you can download a word list instead. Once we have a list of words, we need a way of reading it into Postgres so we can generate our data.
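One way to turn the word list into loadable data is sketched below. It is only an illustration under assumptions: the path `/usr/share/dict/words`, the helper names `load_words`, `fake_rows` and `rows_to_copy_text`, and the two-column row shape are all hypothetical and do not come from the original post. The output is tab-separated text, which is the default input format of PostgreSQL's `COPY`.

```ruby
# Assumed default location; the actual file name varies by distribution.
WORD_LIST_PATH = "/usr/share/dict/words"

# Read one word per line, dropping blank lines and possessives such as "cat's".
def load_words(path)
  File.readlines(path, chomp: true)
      .map(&:strip)
      .reject { |word| word.empty? || word.include?("'") }
end

# Build `count` fake rows of [id, title]; each title is a few random words.
def fake_rows(words, count, words_per_title: 3, rng: Random.new)
  Array.new(count) do |i|
    title = Array.new(words_per_title) { words.sample(random: rng) }.join(" ")
    [i + 1, title]
  end
end

# Serialize rows as tab-separated lines, one row per line.
def rows_to_copy_text(rows)
  rows.map { |row| row.join("\t") }.join("\n") + "\n"
end

# Small demo, run only when the assumed word list is present on this machine.
if File.exist?(WORD_LIST_PATH)
  sample = fake_rows(load_words(WORD_LIST_PATH), 5)
  puts rows_to_copy_text(sample)
end
```

Writing the result to a file and loading it with `COPY` into a table with matching columns is one option; generating `INSERT` statements would work too, but is usually slower for large volumes.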

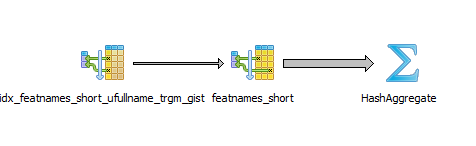

pg_trgm is a PostgreSQL extension providing simple fuzzy string matching. Its operational and conceptual overhead is much lower than that of PostgreSQL full-text search or a separate search engine. On top of that, it can speed up LIKE and ILIKE with trigram indexes, a new index type added by the extension.

The first thing, and maybe the most important part when dealing with indexes (or any code, for that matter), is being able to test it: you need to play around to figure out which index is best for your specific query. To test indexes we need a lot of data, otherwise the query planner won't use our index. So the first thing we need to do is create a lot of fake data so we can test our indexes. It's also important to use real words, depending on the index that you're trying out. There's a clever way of getting them on Linux; if you're not on Linux you can download a word list.

GitLab team members can monitor slow or canceled queries on GitLab.

The only time you should use `pluck` is when you actually need to operate on the values in Ruby itself (for example, writing them to a file). Otherwise, you should ask yourself "Can I not just use a sub-query?". In line with our `CodeReuse/ActiveRecord` cop, you should only use forms like `pluck(:id)` or `pluck(:user_id)` within model code. In the latter case, you should add a small helper method to the relevant model. If you have strong reasons to use `pluck`, it could make sense to limit the number of records plucked. `MAX_PLUCK` defaults to `1_000` in `ApplicationRecord`.

Most models in the GitLab codebase should inherit from `ApplicationRecord`. This allows helper methods to be easily added. An exception to this rule exists for models created in database migrations. These should be isolated from application code, so they should continue to subclass their existing base class rather than switch to `ApplicationRecord`.

UNIONs aren't very commonly used in most Rails applications, but they're very useful. Queries often rely on JOINs to get related data or data based on certain criteria, but JOIN performance can quickly deteriorate as the data involved grows. For example, if you want to get a list of projects where the name contains a value or the name of the namespace contains a value, most people would reach for a JOIN.

When combining scopes, keep the SELECT columns consistent. `User.cached_column_names` is a helper that returns fully qualified (`table.column`) column names (Arel). Assign its result to `columns` and use the same `columns` for both `scope1` and `scope2`, each with its own `where(id: …)` condition, so that one scope does not select the columns explicitly while the other uses `SELECT users.*`.

When ordering records based on the time they were created, you can order by the `id` column instead of ordering by `created_at`. Because IDs are unique and incremented in the order that rows are created, doing so will produce the exact same results. This also means there's no need to add an index on `created_at` to ensure consistent performance, as `id` is already indexed by default.

While WHERE IN and WHERE EXISTS can be used to produce the same data, it is recommended to use WHERE EXISTS whenever possible. PostgreSQL can optimise WHERE IN quite well, but there are also many cases where WHERE EXISTS performs better. In Rails you have to use this by creating SQL fragments, which produce a query along the lines of `SELECT * FROM projects WHERE EXISTS (SELECT 1 FROM users WHERE projects.…)`.

The problem with `find_or_create_by`, `first_or_create` and others is that they are not atomic. Such a method first runs a SELECT, and if there are no results an INSERT is performed. With concurrent processes in mind, there is a race condition which may lead to trying to insert two similar records. This may not be desired, or may cause one of the queries to fail due to a constraint violation. Using transactions does not solve this problem.

To solve this we've added `ApplicationRecord.safe_find_or_create_by`. It is used like `find_or_create_by`, but it wraps the call in a new transaction (or a subtransaction) and retries if the call fails because the record already exists. To be able to use this method, make sure the model you want to use it on inherits from `ApplicationRecord`.

`create_or_find_by` differs from the `safe_find_or_create_by` methods because it performs the INSERT first, and then performs the SELECT only if that call fails. If the INSERT fails, it will leave a dead tuple around and increment the primary key sequence (if any), among other downsides.

We prefer `safe_find_or_create_by` if the common path is that we have a single record which is reused after it has first been created. However, if the more common path is to create a new record, and we only want to avoid duplicate records being inserted on edge cases, `create_or_find_by` can save us a SELECT.

Both methods use subtransactions internally if executed within the context of an existing transaction. This can significantly impact overall performance, especially if more than 64 live subtransactions are being used inside a single transaction.
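To make the `find_or_create_by` race and the `safe_find_or_create_by` remedy concrete, here is a minimal pure-Ruby sketch. It is not GitLab's implementation: `InMemoryTable` and `RecordNotUnique` are hypothetical stand-ins for a table with a unique constraint and for the database's uniqueness error, and the method below only mimics the select, insert, then recover-on-conflict flow described in this section.

```ruby
# Hypothetical stand-in for the database's uniqueness-violation error.
class RecordNotUnique < StandardError; end

# Hypothetical in-memory "table" with a unique constraint on the name.
class InMemoryTable
  def initialize
    @rows = {}
    @lock = Mutex.new
  end

  # SELECT: returns the row, or nil when it does not exist.
  def find_by(name)
    @lock.synchronize { @rows[name] }
  end

  # INSERT: raises when the unique constraint would be violated.
  def insert(name)
    @lock.synchronize do
      raise RecordNotUnique, "duplicate name: #{name}" if @rows.key?(name)

      @rows[name] = { name: name }
    end
  end

  def count
    @lock.synchronize { @rows.size }
  end
end

# SELECT first and INSERT only when nothing was found. If another thread
# wins the race between the two steps, the INSERT raises and we fall back
# to a second SELECT instead of failing.
def safe_find_or_create_by(table, name)
  table.find_by(name) || table.insert(name)
rescue RecordNotUnique
  table.find_by(name)
end
```

Calling `safe_find_or_create_by(table, "gitlab")` from several threads at once should leave exactly one row, with every caller receiving it. Note that the unique constraint is what makes this safe: without it, the lost race would silently create a duplicate.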
